THE INSTITUTE FOR AI MEASUREMENT SCIENCE

OPEN MEASUREMENT INFRASTRUCTURE FOR AI EVIDENCE GENERATION.

TRANSLATING AMBIGUOUS DOCUMENTS TO TECHNICAL EXECUTABLES.

All artifacts described are planned deliverables, not operational tools.

APPROACH

The following describes our intended approach, with reference implementations planned as part of our roadmap phases.

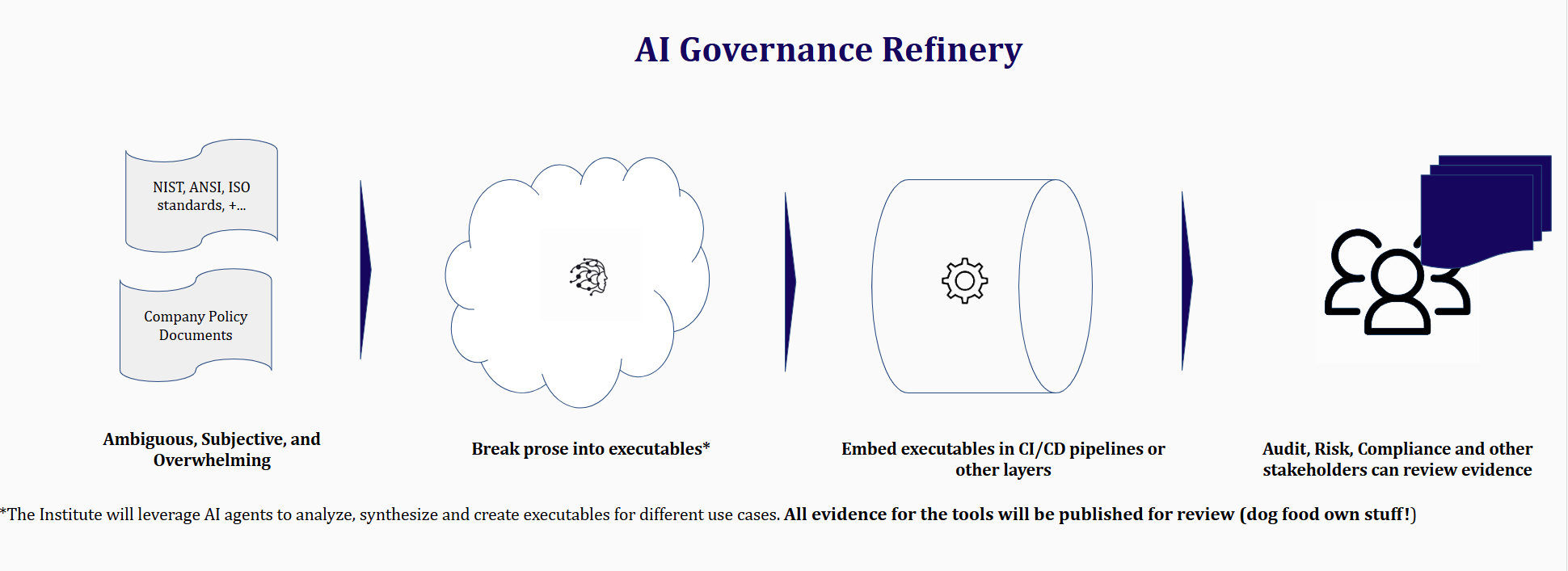

Innovation shouldn't be smothered by governance. Today, we are asking our brightest engineers to navigate a sea of subjective prose rather than objective code. We fix the translation gap.

The Problem: Numerous documentation-heavy policies (NIST, ISO, company policies).

The Process: AI Governance Refinery (breaking prose into executables).

The Result: Unified Evidence Manifest (Facts for humans to review).

The machine provides the facts; the human provides the wisdom. We automate the audit, not the accountability.

IAIMS does not represent, certify, or officially interpret any external framework. We provide reference mappings for community review.

The Scientific Method

Our selection process for evidence collectors is based on repeatability, observability, and peer-reviewed safety standards.

Conflict of Interest Policy

IAIMS maintains a strict firewall between its technical artifacts and any third-party auditing services to ensure the neutrality of our measurement science.

AI Governance Refinery

Our engine will synthesize global frameworks (NIST, ISO, EU AI Act) into open-source, executable evidence collectors.

The Executable Library

We treat AI governance evidence as a measurable object with properties such as:

- Defined scope and limits
- Explicit assumptions and uncertainty
- Versioning and change sensitivity
- Auditability and traceability

Standardized, audit-ready scripts that turn requirements into technical deliverables.
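The properties listed above could be carried by a single record type. The following is a minimal sketch only: `EvidenceRecord`, its field names, and the `fingerprint` helper are hypothetical illustrations, not a planned IAIMS schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvidenceRecord:
    """Hypothetical evidence object; all field names are illustrative."""

    claim: str                    # what was measured
    scope: str                    # defined scope and limits
    assumptions: tuple[str, ...]  # explicit assumptions
    value: float                  # measured value
    uncertainty: float            # +/- bound on the measured value
    procedure_version: str        # version of the measurement procedure
    source: str                   # where the raw data came from (traceability)

    def fingerprint(self) -> str:
        """Return a SHA-256 digest of the record's canonical JSON form."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


record = EvidenceRecord(
    claim="held-out accuracy",
    scope="English test split only",
    assumptions=("test split is disjoint from training data",),
    value=0.93,
    uncertainty=0.02,
    procedure_version="1.0.0",
    source="eval-run-17",
)
print(record.fingerprint())
```

Because the digest covers every field, a change to the procedure version or to an assumption produces a different fingerprint, which is one simple way to make evidence change-sensitive and traceable.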

How We Fit In

The Institute operates alongside, but does not duplicate, the efforts of standard-setting groups and regulators. While traditional players collect existing documents, we operate as the Public Logic Layer. We are defining the tools that will collect useful evidence and keep the audit trail transparent. IAIMS does not interpret policy, make regulatory judgements, or issue compliance determinations. It provides technical evidence collection mechanisms that others may integrate into their own evaluation processes.

Governance and Independence

The Institute for AI Measurement Science is an independent, nonprofit organization. We operate under transparent technical governance, public conflict-of-interest policies, and an explicit separation between measurement and judgement. Neutrality is essential to trust in measurement.

REQUIREMENTS → RUNNABLE

From prose to deliverable: concrete examples of the translation

Hypothetical examples of future mapping concepts (not yet implemented):
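One way to picture the translation is a collector that turns a prose requirement into observable facts. This is a sketch under stated assumptions: the requirement text, the `REQUIRED_FIELDS` names, and `collect_release_evidence` are invented for illustration and are not taken from any external framework.

```python
# Hypothetical requirement, written the way a policy document might phrase it.
REQUIREMENT = (
    "Each release records its model version, evaluation dataset, "
    "and evaluation date."
)

# Hypothetical metadata keys that would satisfy the requirement above.
REQUIRED_FIELDS = ("model_version", "eval_dataset", "eval_date")


def collect_release_evidence(release: dict) -> dict:
    """Report which required fields are present and non-empty.

    The collector only records facts; it does not decide compliance.
    """
    observed = {
        name: release.get(name) not in (None, "")
        for name in REQUIRED_FIELDS
    }
    return {
        "requirement": REQUIREMENT,
        "observed": observed,
        "missing": [name for name, present in observed.items() if not present],
    }


evidence = collect_release_evidence(
    {"model_version": "1.2.0", "eval_dataset": ""}
)
print(evidence["missing"])
```

The output lists what was and was not found, leaving the judgement about whether that is acceptable to a human reviewer.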

ARTIFACTS FOR REAL OVERSIGHT

AI Bills of Materials

Machine-readable inventories of models, dependencies, and versions for transparent governance.
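As a rough illustration, such an inventory could be a small structure serialized to JSON. The `Component` and `AIBom` types below are hypothetical and do not follow any published BOM specification.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Component:
    """One inventoried item; field names are illustrative."""

    name: str
    version: str
    kind: str  # for example "model", "dataset", or "library"


@dataclass
class AIBom:
    """Hypothetical AI bill of materials for a single system."""

    system: str
    components: list[Component] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the inventory with sorted keys for stable diffs."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


bom = AIBom(
    system="support-chat-assistant",
    components=[
        Component(name="base-language-model", version="2.1.0", kind="model"),
        Component(name="tokenizer-lib", version="0.15.2", kind="library"),
    ],
)
print(bom.to_json())
```

Sorting keys keeps the serialized form deterministic, so two versions of the inventory can be compared with an ordinary text diff.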

Evidence Schemas & Formats

Structured representations that map internal evaluations to regulatory frameworks.

Versioned Measurement Procedures

Repeatable, documented methods supporting comparability and auditability.

Reference Implementations

Non-prescriptive reference implementations for interoperability.

Open, Standards-Aligned Infrastructure

Non-proprietary artifacts designed for interoperability and reuse across organizations.

BUILT FOR YOUR WORKFLOW

Open source means freedom. No vendor lock-in, no black boxes, no hidden logic.

Zero Lock-in

Avoid 'Compliance Lock-in.' With our planned open-source evidence collectors, your governance logic will remain portable. You won't lose your audit history if you switch vendors.

Interoperability

Do it once, use it everywhere. Our tools will translate internal telemetry into formats mapped to multiple frameworks (NIST, ISO, EU AI Act), eliminating redundant work.
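The "do it once" idea can be sketched as a single lookup table that tags each internal metric with every framework item it relates to. Everything here is an assumption for illustration: the metric names, the `framework-a` and `framework-b` placeholders, and the item identifiers do not correspond to real NIST, ISO, or EU AI Act clauses.

```python
from collections import defaultdict

# Hypothetical mapping from internal metric names to placeholder references
# of the form "<framework>:<item>". These are not real clause identifiers.
MAPPING = {
    "eval_accuracy": ["framework-a:item-1", "framework-b:item-7"],
    "data_lineage_recorded": ["framework-a:item-3"],
}


def group_by_framework(telemetry: dict, mapping: dict) -> dict:
    """Regroup one telemetry snapshot into per-framework evidence views."""
    grouped = defaultdict(dict)
    for metric, value in telemetry.items():
        # Metrics with no mapping entry are skipped rather than guessed at.
        for reference in mapping.get(metric, []):
            framework, item = reference.split(":", 1)
            grouped[framework][item] = {"metric": metric, "value": value}
    return dict(grouped)


views = group_by_framework(
    {"eval_accuracy": 0.93, "latency_ms": 120},
    MAPPING,
)
print(sorted(views))
```

The telemetry is collected once; only the mapping table changes when a new framework is added.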

CI/CD Integration

Governance at the speed of code. Our open scripts will slot directly into your existing pipelines, making safety a release gate, not a manual bottleneck.
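A release gate of this kind can be as small as a function that returns a process exit code. The sketch below is hypothetical: `failed_checks`, `gate_exit_code`, and the metric names are illustrative, and the minimum values are assumed to be set by the adopting organization, not by IAIMS.

```python
def failed_checks(measurements: dict, minimums: dict) -> list:
    """Return metric names that are missing or below the local minimum."""
    failures = []
    for name, minimum in sorted(minimums.items()):
        value = measurements.get(name)
        if value is None or value < minimum:
            failures.append(name)
    return failures


def gate_exit_code(measurements: dict, minimums: dict) -> int:
    """Return 0 when every check passes and 1 otherwise."""
    return 1 if failed_checks(measurements, minimums) else 0


# Hypothetical values; a pipeline would load these from its own artifacts.
measurements = {"eval_accuracy": 0.93, "toxicity_pass_rate": 0.97}
minimums = {"eval_accuracy": 0.90, "toxicity_pass_rate": 0.99}

print(failed_checks(measurements, minimums))
print(gate_exit_code(measurements, minimums))
```

In a pipeline step, passing the result to `sys.exit` would make a nonzero code fail the job, which is how most CI systems decide whether a release proceeds.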

WHAT WE DO NOT DO

No Certifications

We do not certify or approve AI systems

No Determinations

We do not issue compliance or safety determinations

No Rankings

We do not score, rank, or endorse models or organizations

No Commercial Services

We do not sell software or consulting

No Endorsements

We do not score, rank, or endorse organizations

No Policy Advocacy

We do not advocate for specific laws or policies

WHY MEASUREMENT MATTERS NOW

Measurement infrastructure reduces friction while strengthening oversight.

Let's consider a few scenarios:

Enterprise scenario: A global bank's AI documentation fails auditors because evidence is inconsistent across releases.

Cross-framework scenario: A vendor's compliance package works for ISO but fails to map to NIST, so auditors must reinterpret the same evidence.

Portable evidence packs are the path forward.

Enforcement is Real

Regulations such as the EU AI Act and expanding U.S. state activity now require technical evidence.

Complexity Threshold Crossed

Autonomous agents and continuous updates break static documentation and one-time audits.

Evidence Fatigue is Widespread

The grace period is over. AI without automated governance is commercially unviable due to regulatory friction. Standardization reduces the resource burden of compliance.

RESEARCH & INSIGHTS

Deep dives into the science and practice of AI measurement. Expert perspectives on building reproducible measurement infrastructure.

Content in development — initial posts coming soon.

AI Safety Measurement

Methods for reproducible AI measurement.

Regulatory Frameworks

Mapping evidence artifacts to diverse frameworks without judgement.

Evidence Formats & Protocols

Standardized formats and protocols for evidence collection and integration.

Technical Artifact Formats & Schemas

Structural definitions for interoperable measurement artifacts.

Integration Guidance

Practical approaches to integrating measurement artifacts into existing workflows.

Open Methodology

Transparent, versioned, and reproducible measurement protocols for the community.

CONNECT WITH THE INSTITUTE

Our Goal: Map 100% of AI frameworks to automated evidence collectors. We are building a world where AI safety is proven by data, not claimed by marketing.

Have a question or need details? Fill out the form and we'll respond promptly.